When the Mind Isn't the Model

The conversation around large language models (LLMs) has been dominated by a single question: "Are they intelligent?" But this framing misses the point. The real breakthroughs in AI will come not from debating the nature of machine consciousness, but from building thoughtful, governed systems where LLMs act as reliable components, not autonomous minds.

The Illusion of Autonomy

The apparent capabilities of LLMs can create a false impression of independent agency. In practice, these models are powerful tools that require careful design, oversight, and control to be used safely and effectively. Governance and safety therefore belong at the forefront: LLMs should be embedded within larger systems that maintain human oversight and the ability to refuse or override model outputs.

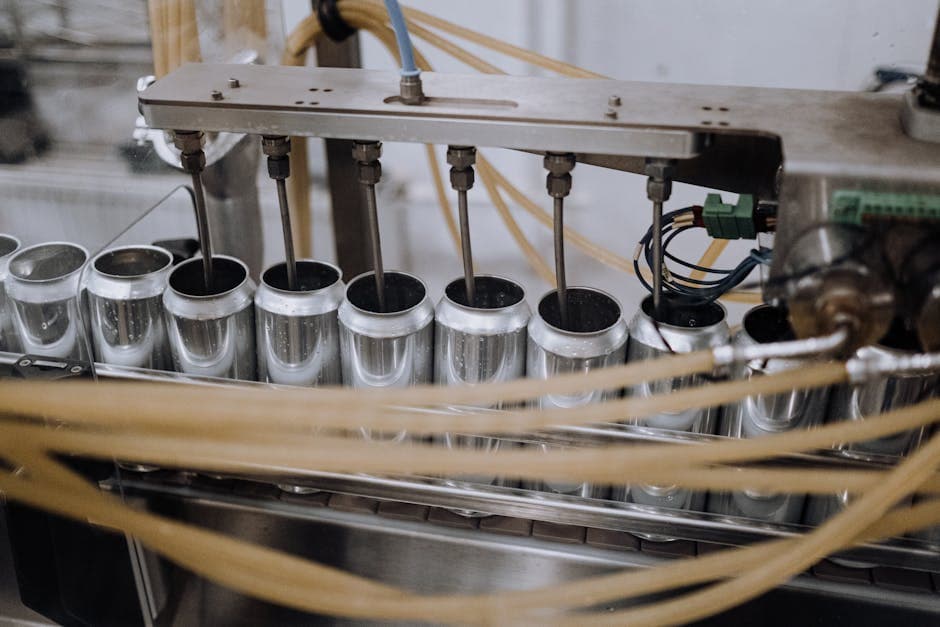

Patterns in the Scaffolding

Responsible AI system design is less about building "smarter" models than about constructing the scaffolding that lets LLMs serve as trustworthy components. Key elements include transparent model provenance (knowing which model produced a given output), clear task boundaries, robust prompt engineering, and comprehensive monitoring and auditing. By focusing on these structural elements, we can unlock the true potential of large language models without succumbing to the illusion of machine autonomy.
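
To make the scaffolding idea concrete, here is a minimal sketch of a wrapper that treats the model as one component behind a task boundary, tags every call with a provenance identifier, and appends each call to an audit log. All names here (`GovernedComponent`, `model_fn`, `model_id`, `allowed_tasks`) are hypothetical and chosen for illustration; the model is assumed to be any callable that maps a prompt string to an output string, and a real deployment would likely write the log to durable storage instead of an in-memory list.

```python
import hashlib
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class GovernedComponent:
    """Hypothetical wrapper: the model is a component, not the system."""

    model_fn: Callable[[str], str]  # assumed interface: prompt in, text out
    model_id: str                   # provenance: which model produced the output
    allowed_tasks: frozenset        # task boundary: anything else is refused
    audit_log: list = field(default_factory=list)

    def run(self, task: str, prompt: str) -> dict:
        # Refuse before the model is ever called if the task is out of bounds.
        if task not in self.allowed_tasks:
            return self._record(task, prompt, output=None, status="refused")
        return self._record(task, prompt, self.model_fn(prompt), status="ok")

    def _record(self, task: str, prompt: str,
                output: Optional[str], status: str) -> dict:
        # Every call, including refusals, leaves an auditable trace.
        entry = {
            "timestamp": time.time(),
            "model_id": self.model_id,
            "task": task,
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "status": status,
            "output": output,
        }
        self.audit_log.append(entry)
        return entry


# Usage with a stand-in model (a plain function, not a real LLM call).
component = GovernedComponent(
    model_fn=lambda prompt: prompt.upper(),
    model_id="stub-model-v0",
    allowed_tasks=frozenset({"summarize"}),
)
in_bounds = component.run("summarize", "quarterly report text")
out_of_bounds = component.run("diagnose", "patient notes")
```

In this sketch the refusal path never invokes the model at all, so the boundary holds regardless of what the model would have produced.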

Humans in the Loop

Humans are not just end users of AI systems; they are critical actors within the loop. Effective AI governance requires well-defined roles, responsibilities, and workflows for operators, supervisors, and other stakeholders. This includes processes for human review, override, and escalation, as well as clear guidelines for model limitations and appropriate use cases. By empowering humans to be active participants in the system, we can ensure that LLMs are deployed safely and in service of our shared goals.
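
The review, override, and escalation workflow described above can be sketched as a small decision function. This is an illustration under stated assumptions, not a prescribed process: the `Decision`, `Review`, and `resolve` names are hypothetical, the two roles are modeled as plain callables, and the only rule encoded is that a model draft is released when a human approves or replaces it and is otherwise refused.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Decision(Enum):
    APPROVE = "approve"    # release the model's draft unchanged
    OVERRIDE = "override"  # replace the draft with human-written text
    ESCALATE = "escalate"  # hand the draft to the next role


@dataclass
class Review:
    decision: Decision
    replacement: Optional[str] = None  # only meaningful for OVERRIDE


# Assumed interface: a reviewer reads a draft and returns a Review.
Reviewer = Callable[[str], Review]


def resolve(draft: str, operator: Reviewer, supervisor: Reviewer) -> str:
    """Return the text to release, or raise if no human signed off."""
    review = operator(draft)
    if review.decision is Decision.ESCALATE:
        review = supervisor(draft)

    if review.decision is Decision.APPROVE:
        return draft
    if review.decision is Decision.OVERRIDE and review.replacement is not None:
        return review.replacement

    # Default is refusal: nothing ships without an explicit human decision.
    raise RuntimeError("draft was neither approved nor replaced; not released")


# Usage: the operator escalates and the supervisor overrides the draft.
released = resolve(
    "model draft",
    operator=lambda draft: Review(Decision.ESCALATE),
    supervisor=lambda draft: Review(Decision.OVERRIDE, "human-corrected text"),
)
```

The design choice worth noting is the final `raise`: when the chain of reviewers runs out without an approval or a replacement, the sketch withholds the output instead of falling back to the model's draft.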

Towards a Future of Responsible AI

The shift in mindset from "LLMs as minds" to "LLMs as components" unlocks a new era of trustworthy, impactful AI applications. By focusing on the system around the model, we can harness the power of large language models while maintaining human agency, oversight, and control. This is the true breakthrough in AI: not the models themselves, but the responsible, refusal-first frameworks we build to govern them.